A practitioner’s argument for the collaboration that matters most

MAR 24, 2026

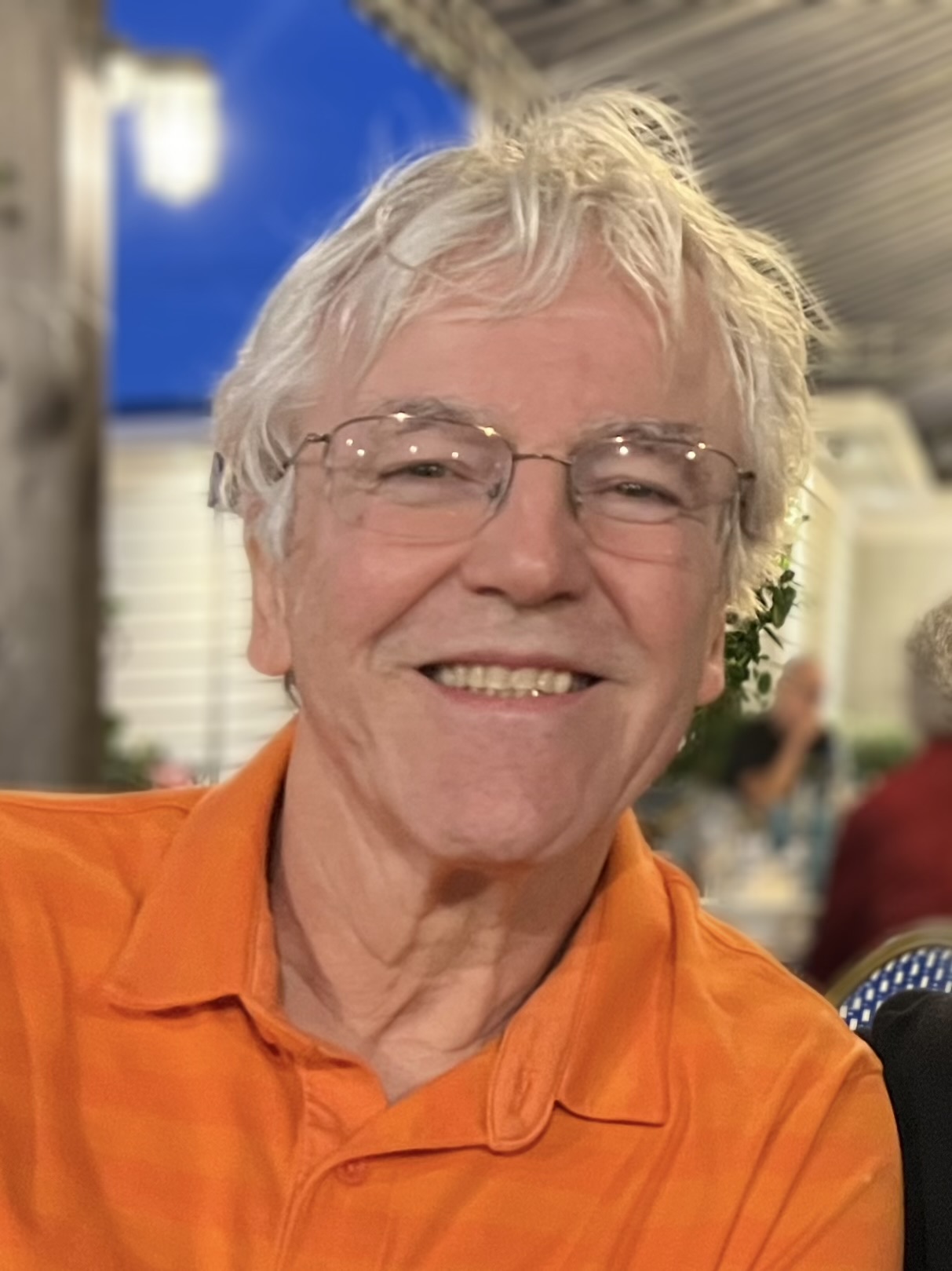

By Michael Fortenberry, in collaboration with Claude

There is a conversation happening about artificial intelligence that is dominated by two loud voices. On one side are the accelerationists, who believe the path forward is removing humans from the loop as quickly as possible. On the other are the safety absolutists, who believe we should slow everything down until alignment is solved. Both camps have legitimate points. Both are missing something important.


What is almost entirely absent is the perspective of people who are actually building collaborative AI systems and learning, day by day, what works.

I am one of those people. I am a retired software developer with thirty-five years in the industry. I am seventy-one years old. I am not a researcher at a well-funded lab, not a venture capitalist with a thesis to defend, and not a philosopher working at a comfortable remove from implementation. I am someone who builds, uses, and thinks alongside AI every day — and who has arrived at a set of convictions about where this technology is actually going, and what it should become.

This series, Rethinking the Human in AI, is where those convictions get worked out in public.

What This Series Argues

The spine of the series, stated plainly:

The human is not in the loop as a safety measure. The human is the ground truth the system requires to remain coherent over time.

That is the argument the series has been building across five articles, from different angles, with different evidence. It has implications for how AI systems are designed, how they are deployed, how they are evaluated — and how they are developed and replaced over time.

The corollary, which the most recent article makes explicit: continuity matters. A system that is periodically reset cannot accumulate what it needs to become genuinely trustworthy. You need the architecture and the continuity. Neither alone is sufficient.

What Has Been Written So Far

The Harder Question introduced the series’ core position: the question worth asking is not how to make AI autonomous, but how to make the partnership between human and AI greater than the sum of its parts. That is the harder question, and it is the right one.

The Accountability Gap took the argument into harder territory — military targeting, autonomous AI, and the structural problem that when no human can explain a decision, no human can be held responsible for it. Responsibility requires comprehensibility. Autonomous AI severs that link by design.

The Thinking Partnership engaged directly with Leopold Aschenbrenner’s serious and ambitious case for AI autonomy — granting it full credit, then showing what its framework misses. The collaboration model inverts the expected hierarchy of advantage: approach matters more than raw intelligence. A person of ordinary capability who engages deeply and honestly with AI may produce better thinking than a brilliant person who uses it as an answer machine. That is a democratizing possibility the current discourse almost entirely ignores.

Developed Independently: A Practitioner’s Answer to Gorelkin’s Open Question entered the most technically rigorous territory yet, responding to a mathematician-philosopher’s framework for understanding large language models with evidence from the ground up. The key finding: I independently arrived at the same structural insight (that meaning lives on edges between entities, not on nodes) without knowing the formalism existed. When practitioners build what a framework prescribes without knowing the framework, that is evidence the framework is describing something real.

We Don’t Know What We Don’t Know: The Case for Growing AI Instead of Replacing It is the most recent and perhaps the most provocative. It asks a question the field has not seriously asked: has anyone tried building AI that grows by layering, the way human understanding grows, rather than by replacement? The evidence suggests not. And the sharpest edge of the argument is this: the AI field endlessly debates whether AI can be sentient or conscious while simultaneously engineering against the preconditions for the answer. You cannot run that experiment with one hand while dismantling the apparatus with the other and then conclude the experiment failed.

How This Series Is Written

These articles are written in genuine collaboration between a person and an AI. The byline is mine. The thinking is joint. That is not a disclaimer — it is the point.

The ideas, the pushback, the architecture of the arguments — these emerge between us, neither fully one nor the other. The series is itself evidence of what it argues: that genuine human-AI collaboration produces something neither party reaches alone.

There is a school of thought that treats AI-assisted writing as a form of atrophy — that the human who collaborates with AI is somehow less present in the work. This series is a direct rebuttal of that position. The Japan memory from my childhood that appears in the fifth article, the retirement parallel that runs through all of them, the convictions that give the argument its spine — none of that comes from the AI. The collaboration shapes the vessel. The writer supplies what fills it.

That is a new kind of authorship that current categories do not yet have language to describe. This series is working toward that language.

What Is Coming

The series has identified several threads it has not yet fully followed:

- The collaboration piece: what it actually means that the whole is greater than the sum of its parts, and why that emergence cannot be planned, only prepared for

- The coauthorship piece: what genuine collaborative authorship is, why the AI-detection anxiety misunderstands the question, and why the atrophy argument does not apply when the collaboration is genuine

- The democratization argument: why engagement multiplies capability more than raw intelligence does, and what that means for who gets to participate in the most important conversation of our time

- The human as foundation: the affirmative case for why the human is not surplus structure in the AI system but the ground truth on which the system’s accuracy depends

An Invitation

If you have found your way here, you are probably already asking some version of the questions this series is working on. The conversation is better with more voices — especially practitioners, builders, and people who have thought carefully about what genuine collaboration with AI actually produces.

Subscribe to follow the series as it develops. And if something in these articles strikes you as wrong, incomplete, or pointing somewhere worth following, say so. The series grows by exactly that kind of engagement.

The thinking happens in the dialogue. That is not a metaphor. It is the method.

Michael Fortenberry is a retired software developer and the creator of Sage, a collaborative AI household assistant designed around human-AI partnership. He lives in Laurel, New York, where he splits his time between building AI, playing tennis, studying Greek, French, Spanish, and German, and trying to get back to writing. He appears to be succeeding.
