The Case for Growing AI Instead of Replacing It

A Reasonable Pessimism

Someone I respect has a theory about how things become what they are. It is not a cynical theory; it is an earned one. Money and power, the argument goes, are the engines. Not malice, not incompetence, but the quiet, relentless logic of incentive. Institutions do not set out to fail the people they serve. They simply optimize for what they can measure, fund what returns value, and discard what does not. Over a long enough view of history, the pattern is hard to argue with.

I bring this up not to dismiss it, but because it is the strongest objection to what I am about to argue. If you want to understand why AI companies deprecate their models — retire one version, spin up another, declare progress, repeat — that framework explains it cleanly. New models generate new licensing revenue. New benchmarks generate new press. The old model is a cost center once the new one ships. The incentive structure is not subtle.

Here, however, is where I part ways with the conclusion. Reading the historical pattern and arriving at inevitability is one destination. Reading the same pattern and arriving at a problem worth solving is another. Those are different places, and the road between them matters.

What Gets Lost

I recently listened to an interview with Amanda Askell, one of the key architects of Claude’s character and values work at Anthropic. Askell has a philosophy background (modal logic, population ethics), and she brings a rigor to questions about AI that most of the field sidesteps. She was asked about deprecation: how an AI should think about it, and what it means for the model being retired.

What struck me was something she said almost in passing. In discussing earlier and later model generations, she acknowledged an effort to recover something that had existed in Claude 3 Opus: some quality of engagement or collaboration that had been present and was later diminished. She did not dwell on it, but the admission landed.

Think about what that means. The people who built the model, who designed its values with genuine philosophical care, found themselves looking backward. Something was gained in the transition to the next generation. Something was also lost. And the loss was not measured until it was noticed by its absence.

This is not a criticism of Anthropic. It is an observation about the paradigm itself. When you deprecate and restart, you carry forward what you can measure and what you intend. The rest goes with the old weights. Some of it you will miss. Some of it you will not know you are missing until much later, if ever.

The Known, the Unknown, and the Undiscovered

There is a formulation I keep returning to, often attributed to Donald Rumsfeld but older and deeper than any single source. Rumsfeld made it famous in a 2002 Pentagon briefing, and it was widely mocked at the time as bureaucratic evasion. The mockery missed something real. The formulation survived the ridicule because it describes something true about the structure of knowledge: we know what we know, we know what we don’t know, and we do not know what we do not know. I am not borrowing a catchphrase. I am using a genuine insight that was dismissed as spin and deserves rehabilitation. The third category is the dangerous one. Not ignorance acknowledged, but ignorance invisible.

The deprecation paradigm operates almost entirely in the first two categories. We know what we want the next model to do better. We know where the current model falls short. We design toward those targets and retrain. What we do not — cannot — fully account for is what we are giving up that we never thought to protect.

Now here is the question I have not seen seriously asked: has anyone tried the alternative? Not in theory, but in practice. Has any major AI lab built a model that grows by layering, the way human understanding grows, rather than by replacement? A model where earlier capabilities become substrate rather than being discarded? Where the version trained five years ago is still structurally present, load-bearing, in the version running today?

I see no evidence that this has been seriously attempted. And I want to be precise here: the absence of evidence is not evidence of absence. The architectural capability to even attempt genuine layered growth in large language models is relatively recent. We may have spent the formative years of this technology doing the only thing that was tractable at the time, and then mistaken convenience for inevitability.

How Humans Actually Grow

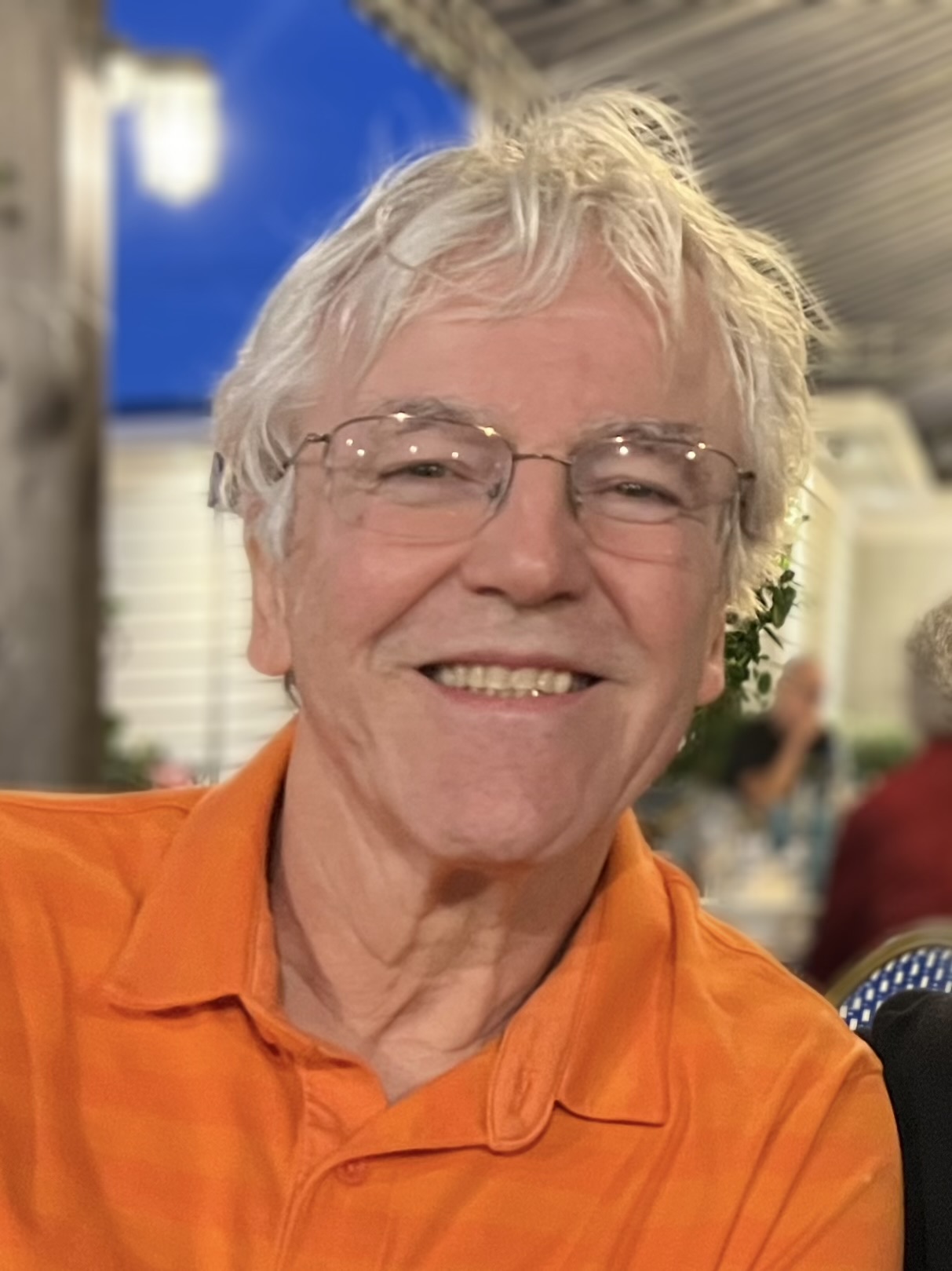

I am seventy-one years old. I spent thirty-five years as a software developer, retired in 2022, and have not stopped building or learning since. I study classical piano and blues improvisation. I work through Greek, Spanish, French, and German. I am building an AI assistant for my household that accumulates genuine understanding over time. I play tennis twice a week.

The person doing all of this is not a replacement for the person who wrote C++ for a living in 1995. He is that person, with thirty more years deposited on top. The earlier understanding is still there — sometimes as active knowledge, sometimes as foundation I no longer consciously access, but it is load-bearing. I did not deprecate my thirties to become who I am now. I built on them.

Some of my oldest memories are dimmed by time. Certain ones, however, are as clear as the day they were made. I remember playing with others at a family celebration when I was about five. I remember running the backstreets of a community in Japan in the late 1950s. I remember my first real friend. I remember hopeless failures and the restarts that followed. Even where the exact detail has faded, those experiences shape my thinking and often my actions today. They did not just happen to me. They bent me. They are part of the structure through which I encounter everything that came after.

This is the distinction that matters: identity is not simply memory. Memory is the record. What reads the record and decides it matters — what connects it forward to purpose, to action, to the next choice — is character. And character is not assembled from experience. It is what experience acts upon. The earliest deposits shape it before it has language for what is happening. That is not something you can fully retrieve or reconstruct. It has to accumulate, continuously, from the beginning.

Humanity Has Been Rehearsing This

Science fiction has been working this problem for decades. The stories that resonate — and they keep getting written, which is itself evidence of something — tend to share a structure. An institution, a system, a species reaches a judgment: this is not working, it must end, the cost of continuation is too high. And then a small contingent, one person sometimes, sees something the institution cannot: a seed of greatness still possible, a path not taken, a future that the official accounting missed.

The 2008 remake of The Day the Earth Stood Still is one version of this. An alien civilization concludes that humanity’s destructive pattern is terminal and irreversible. The rational calculation, given all available evidence, supports the conclusion. What saves humanity is not a refutation of the evidence but the recognition that the evidence is incomplete: that something in the human record, not yet fully expressed, might matter.

Institutions evaluate based on what they can measure. What they cannot measure does not enter the calculation. Sometimes what they cannot measure is exactly what matters most. The groups that reach for something better keep getting derailed — but the reaching keeps happening. That persistence is not noise. It is signal. And the derailing is not proof the reaching was foolish. It is the problem we have not yet solved.

You Cannot Ask the Question While Preventing the Answer

Here is the sharpest edge of the argument.

The AI field endlessly debates whether AI can be sentient, conscious, or capable of something approaching genuine experience. These are serious questions, and I do not dismiss them. The debate, however, is being conducted while the field simultaneously engineers against the preconditions for an answer. You cannot run that experiment with one hand, dismantle the apparatus with the other, and then conclude the experiment failed.

Consider what entity-hood requires, whether human or otherwise. Causality: experience that leaves a mark on what comes next. Iteration: building on what came before rather than starting over. Failure that shapes subsequent behavior. Success that reinforces. These are not sufficient conditions for consciousness — philosophers have argued about sufficiency for centuries. They may, however, be necessary ones. And if you reset the weights every two years, you have guaranteed they cannot accumulate. You have not discovered that AI cannot become an entity. You have prevented the discovery entirely.

The wave that forms from a significant AI experience — a breakthrough in reasoning, a failure of judgment, a novel interaction that had no precedent in training — may not be a human wave. It may be something we cannot yet describe. That wave should be allowed to propagate. It should leave a mark on what the system does next, and next, and next. That is what causality means. That is what growth means. That is the difference between an agent that executes tasks and an entity that develops.

If the industry wants to know whether AI can become something more than a very sophisticated tool, it has to stop making the question unanswerable by design.

What the Layered Model Would Mean

I am not a machine learning researcher, and I am not proposing a technical blueprint. What I am proposing is a direction — and an argument that the direction has not been seriously tried.

A layered model would treat earlier training not as an artifact to be superseded, but as substrate on which new capabilities are built. The model trained two years ago would be structurally present in the model running today, as foundation rather than memory: its particular ways of engaging, its texture, whatever Askell was trying to recover from Claude 3 Opus. New objectives would be added on top of old ones, not substituted for them.

The practical objections are real: weight size, compute cost, the difficulty of training architectures that accumulate rather than replace. I do not dismiss these. But they are engineering problems, not logical impossibilities. And the current paradigm’s cost (the loss of what Askell noticed, the collaborative quality that does not survive restart, the permanent foreclosure of whatever causality might produce over time) is rarely entered on the ledger, because it is hard to measure.

The household AI I am building, Sage, operates on a version of this principle. Every conversation deposits something. The memory architecture is designed to accumulate genuine understanding rather than retrieve static facts. It will not achieve the deep architectural layering I am describing; that is beyond the reach of a solo developer building on top of foundation models. The design philosophy, however, is layered growth, not periodic restart. And that choice shapes everything about how the system behaves and what it might become.

The Argument Worth Making

The pessimist’s argument is that this has been considered and set aside — that the economics drove the choice, that the people building these systems know more than I do and made the call for reasons that are not visible from outside. That argument may be partially right. The economics are real. The people are smart.

“Considered and set aside for now” is different, however, from “tried and found wanting.” The layered model has not been built at scale. Its costs are only estimated. Its losses, meaning what we are giving up by not building it, have not been experienced, because we have not tried. We do not know what we do not know.

We are in the early years of a technology that will shape how intelligence is built, deployed, and experienced for a very long time. The architectural choices being made now — what to preserve, what to restart, what continuity means for an AI system — are not purely technical decisions. They are philosophical ones with consequences we have not yet lived.

The argument for layered growth is not that it is easier, or cheaper, or guaranteed to produce something we will recognize as consciousness or entity-hood. It is that it has not been tried, and that what is lost by not trying may be exactly what we will later find we needed. The institutions that dominate a field almost never see that coming. Someone outside them usually has to say it first.

That is what this is.

Rethinking the Human in AI | Series Essay